Nitzan Orr

Hello, my name is Nitzan. I recently graduated with a Master's in Computer Science, and I am on the job market!

As part of my research, I iteratively tuned a robotic manipulator using ROS to achieve human-level paint sanding performance and measured the results using computer vision (OpenCV), culminating in a co-authored paper (see Publications). Separately, in a robotics course, I developed precise and smooth robotic manipulator control using ROS for a math-equation-solving system, attaining 60 keystrokes per minute. In the research lab, I developed a robot sensor data recording tool in Python that synchronized data from on-board and off-board sensors. A complementary data visualization tool I built with JavaScript and React improved the lab's ability to analyze navigation and manipulation algorithms. I also explored a side interest, improving situational awareness for people who monitor or control remote robots, by training a Neural Radiance Field (NeRF) model and reviewing the literature on the subject.

During summer 2022, I worked at the NASA Ames Research Center in Mountain View, CA. As part of the Astrobee Team within the Intelligent Robotics Group (IRG), I worked on improving the map visualization pipeline. Astrobee, a family of cube-shaped robots deployed in the International Space Station (ISS), mapped several ISS modules, which we wanted to make accessible to certain stakeholders. However, the data was too high-resolution to render in full on the web. My job was to partition the 3D model into smaller chunks at varying resolution levels so that we could populate a Level-of-Detail Octree (3D Tiles). The work and environment were rewarding, and I enjoyed learning about the inner workings of 3D models and texture maps.

Some of the code I wrote is available here; it uses the Blender Python API to automate cropping meshes and reducing texture sizes. The user interface is called ISAAC, not to be confused with my previous project, ISAACS.

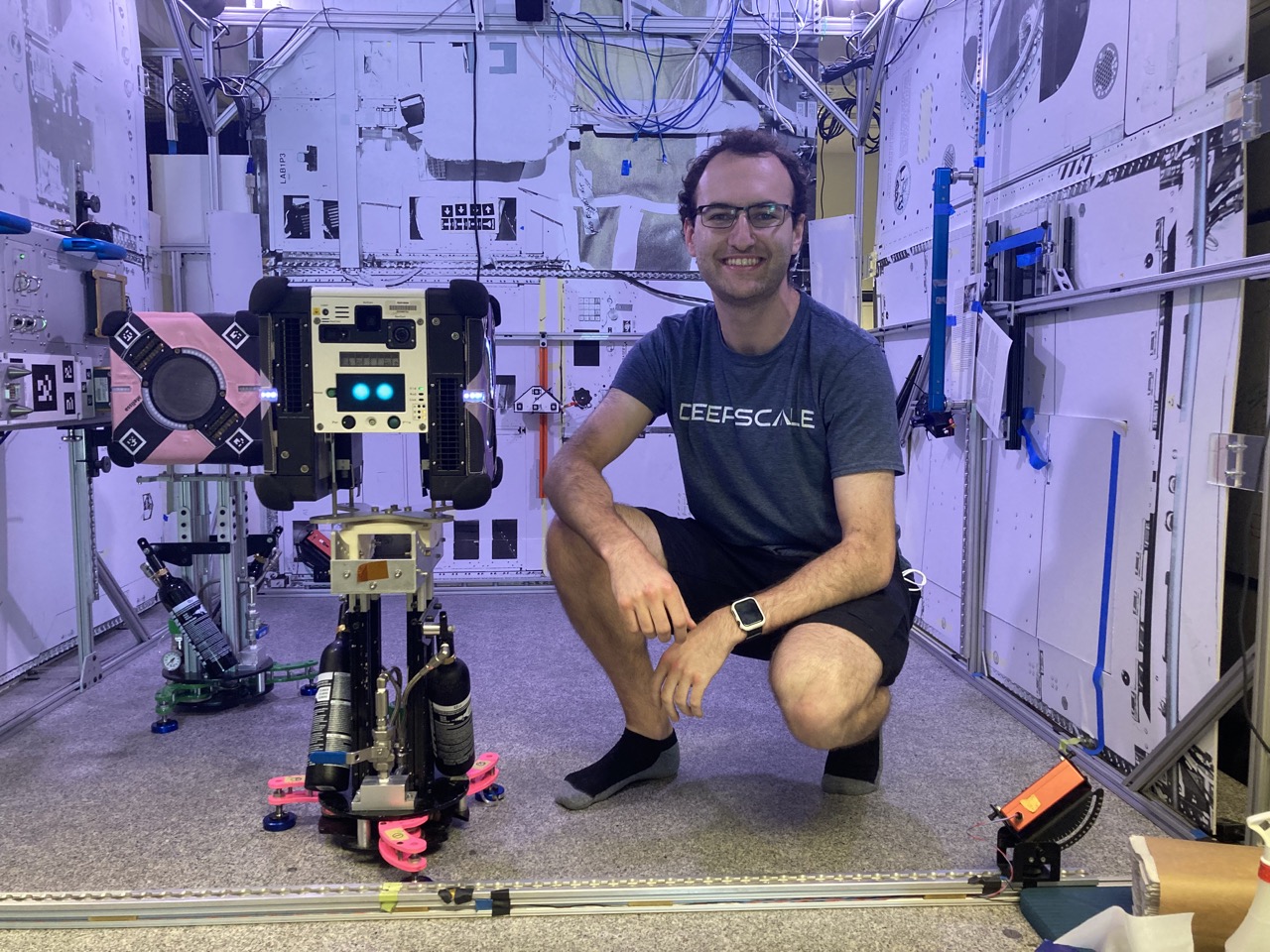

Me with two of our cube-shaped friends hovering millimeters above the zero-friction granite top (them, not me)

I co-led a team of undergraduate researchers developing ISAACS, the Immersive Semi-Autonomous Aerial Command System. We remotely operated drones for mapping, radiation detection, and more, all through virtual reality. ISAACS integrates flight controls and real-time sensor data visualization into a single interface. Our paper was published at the IEEE Nuclear Science Symposium and Medical Imaging Conference (see "Publications"). I was advised by Dr. Allen Yang & Prof. Kai Vetter. ISAACS GitHub

During the school year, I worked at an autonomous indoor vehicle startup on vision applications for robot movement calibration. I not only gained knowledge about mapping, autonomous navigation, and sensors, but also saw what a culture of inclusiveness looks like. Friendly Robots Company

At DeepDrive, I designed and wrote the ROS software that connected all components of our autonomous RC car, from the camera input to the neural network inference and all the way down to the PWM (pulse-width modulation) motor commands. The car is equipped with an NVIDIA TX2 and interfaces with an Arduino and a ZED 1 depth sensor. Once the car was up and running, I collected training data and trained our PyTorch implementation of SqueezeNet, an efficient CNN. I was advised by Dr. Karl Zipser & Dr. Sascha Hornauer. Autonomous RC Car GitHub

Konstant A, Orr N, Hagenow M, Gundrum I, Hen Hu Y, Mutlu B, Zinn M, Gleicher M, Radwin R. "Human–Robot Collaboration With a Corrective Shared Controlled Robot in a Sanding Task." (2024). Human Factors

Konstant A, Orr N, Hagenow M, Senft E, Gundrum I, Mutlu B, Zinn M, Gleicher M, Radwin R. "Human-Robot Collaboration in a Sanding Task." (2023). In Proceedings of the Human Factors and Ergonomics Society Annual Meeting

Hagenow M, Senft E, Orr N, Radwin R, Gleicher M, Mutlu B, Losey D, Zinn M. "Coordinated Multi-Robot Shared Autonomy Based on Scheduling and Demonstrations." (2023). IEEE Robotics and Automation Letters

Orr N*, Dayani P*, Thomopoulos A*, Saran V, Krishnaswamy S, Zhang E, Hu N, McPherson D, Menke J, Yang A, Vetter K. "Immersive Operation of a Semi-Autonomous Aerial Platform for Detecting and Mapping Radiation." (2020). IEEE Nuclear Science Symposium and Medical Imaging Conference

Mehr N, Sanselme M, Orr N, Horowitz R, Gomes G. "Offset Selection for Bandwidth Maximization on Multiple Routes." (2018). American Control Conference
